Involve yourself in enough WordCamps, meetups and community forums, and you start to notice a trend. The same kind of question is asked over and over. It sounds something like…

What plugin should I install to do {feature}?

WordPress users have the world’s most popular CMS, with 29% of the web under its wing and 53,000+ plugins, yet there is still a confidence gap when choosing plugins and themes. Right now, WordPress does a great job of providing plugin and theme information related to:

- The features

- The support you receive

- User reviews

These are all part of what makes a good plugin or theme, but there is an important piece missing. This piece of information answers the question…

Will the code I’m about to install break or put my site at risk?

A plugin or theme could deliver the exact range of features you need, with great support, and positive reviews, but if the quality of code it contains is poor, you risk the integrity of your website. A single line of good code can unlock potential for you and your website, but bad code can trigger untold calamity.

Unfortunately, the barrier to entry for writing good code is higher than we would like to admit.

The good news

We believe there’s a way to streamline complex web engineering processes around code quality into elegant tools that all WordPress users – from builders to admins to developers – can understand. Tools that empower users to make better decisions on the plugins and themes they install on their sites. Tools that equip developers to easily spot problems and craft a better class of code.

Say hello to Tide.

Tide, a project started here at XWP and supported by Google, Automattic, and WP Engine, aims to equip WordPress users and developers to make better decisions about the plugins and themes they install and build.

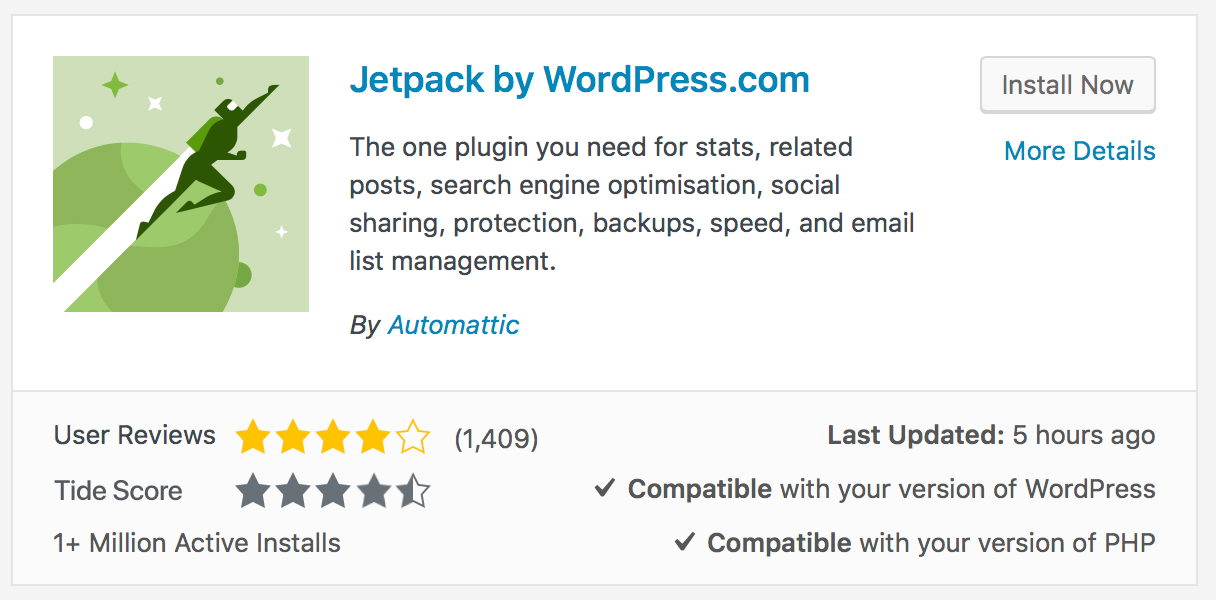

Tide is a service, consisting of an API, Audit Server, and Sync Server, working in tandem to run a series of automated tests against the WordPress.org plugin and theme directories. Through the Tide plugin, the results of these tests are delivered as an aggregated score in the WordPress admin that represents the overall code quality of the plugin or theme. A comprehensive report is generated, equipping developers to better understand how they can increase the quality of their code.
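To make the idea of an "aggregated score" concrete, here is a minimal sketch of one way several audit results could be combined into a single rating. It is only an illustration: the `AuditResult` type, the weights, the 0-100 scale, and the half-star rounding are all assumptions made for this example, not a description of how Tide actually computes its score.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AuditResult:
    """One automated test result (hypothetical shape, for illustration only)."""
    name: str      # illustrative audit name, not an actual Tide audit identifier
    score: float   # assumed scale: 0 (worst) to 100 (best)
    weight: float  # assumed relative importance of this audit


def aggregate_score(results: List[AuditResult]) -> float:
    """Combine individual audit scores into one weighted average (0-100)."""
    total_weight = sum(r.weight for r in results)
    if total_weight <= 0:
        raise ValueError("at least one audit with a positive weight is required")
    return sum(r.score * r.weight for r in results) / total_weight


def to_stars(score: float, max_stars: int = 5) -> float:
    """Map a 0-100 score onto a star scale, rounded to the nearest half star."""
    return round(score / 100 * max_stars * 2) / 2


if __name__ == "__main__":
    audits = [
        AuditResult("coding-standards", score=90, weight=1),
        AuditResult("php-compatibility", score=70, weight=1),
    ]
    overall = aggregate_score(audits)
    print(f"overall: {overall:.1f}, stars: {to_stars(overall)}")
```

A weighted average is just the simplest possible choice here; a real scoring scheme might treat some failures as disqualifying rather than averaging them away.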

The image below is an early concept of how Tide could introduce the score to the plugin tile in the WordPress admin. How would you present this data? We welcome your feedback.

Tide brings code transparency to the individual, with the collective outcome being an increase of quality across the entire WordPress ecosystem.

Tide at WordCamp US

Alongside our friends at Google, we’ll be sharing Tide with the WordPress community at WordCamp US in just a few weeks. The Tide plugin will be released shortly after.

Why this is important to us

At XWP, we are working for a future where the open web is more performant, secure, reliable, and accessible. WordPress plays an undeniably large role in this, with the quality of code across the ecosystem setting the stage for either its success or its struggles.

What’s next

We know it’ll take some time to make this tool perfect, but we believe in the positive impact good code will have on the WordPress community and the open web. In the same spirit as other open source projects like Let’s Encrypt, Travis CI and WordPress itself, we believe that “a rising tide lifts all boats,” and we want your help in getting this right. If you feel you can contribute, stay tuned as we release further details in the coming weeks.

Really awesome project! I love the idea of the API and report for devs, I’m just not so sure about the stars concept. I don’t see how that is useful for the average user. Should I only install 5 star plugins, or 3 star plugins? What about 2 star plugins? If the advice to regular users is that they should or shouldn’t install a plugin based on some kind of criteria, why not just tell them that, instead of making them guess how many stars is ok for their site? It seems to me RED / GREEN, PASS / FAIL would be much better. Developers could use a detailed report, that’s great, but I think for the user-facing side it doesn’t make sense. Also, what’s a “TIDE” score? That’s just branding a new word for no reason. Call it “Code Quality: PASS / FAIL” and then people don’t have to look up what a TIDE score is. My $0.02.

Hi,

A very interesting & useful idea. Can I suggest that star ratings are a little ambiguous & confusing when sat next to another star rating? As you suggest, what a user/site owner is concerned about is *risk* – they want to know the potential risk of uploading certain plugins. Risk could be quickly communicated via a simple bar and colour code – on the assumption that better code = less risk.

In addition, a flag system could be useful – so a plugin could be low risk but display a performance impact flag.

The PHP compatibility determination can be quite a challenge, because many plugins contain code for “backward compatibility” with older versions of WordPress and PHP that is only used as required. Most (all?) compatibility checkers for PHP tend to be static code checkers and do not understand the (conditional) context in which some code may be used. So you can easily falsely infer that a plugin has compatibility issues because it contains some code that is not supported by the PHP version in use on the site, when in fact this code would not be used anyway. Unless a “compatibility” checker can handle this, it might end up causing plugin authors to simply remove backward compatibility code to avoid these types of “false positives” and indirectly make their plugins _less_ compatible across the range of PHP versions that users may be legitimately using. If they don’t do this, a false positive on PHP compatibility will put potential users off using the plugin, as it is unlikely that users will go to the trouble of asking the plugin developer about this or even reading any pre-prepared statement concerning such false positives.
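The false-positive problem described above can be sketched with a toy checker. This is an illustration only, not how any real compatibility tool works: the PHP snippet, the tiny list of removed functions, and both helper functions are made up for this example. The naive scan flags `mysql_connect` (removed in PHP 7.0) even though the call sits behind a `function_exists()` guard; the second pass applies a deliberately crude heuristic that skips any name guarded somewhere in the file.

```python
import re

# A tiny illustrative subset of functions removed in PHP 7.0.
REMOVED_IN_PHP7 = {"mysql_connect", "ereg", "split"}

# Hypothetical plugin code with a guarded backward-compatibility branch.
PHP_SOURCE = """
<?php
if (function_exists('mysql_connect')) {
    $link = mysql_connect($host, $user, $pass); // legacy fallback
} else {
    $link = mysqli_connect($host, $user, $pass);
}
"""

# Matches anything that looks like a function call: an identifier followed by "(".
CALL = re.compile(r"\b([A-Za-z_][A-Za-z0-9_]*)\s*\(")

# Matches function_exists('name') guards and captures the guarded name.
GUARD = re.compile(r"function_exists\(\s*['\"](\w+)['\"]\s*\)")


def naive_flags(source: str) -> list:
    """Flag every call to a removed function, ignoring any surrounding context."""
    return sorted({name for name in CALL.findall(source) if name in REMOVED_IN_PHP7})


def context_aware_flags(source: str) -> list:
    """Crude heuristic: drop names that are guarded by function_exists() anywhere."""
    guarded = set(GUARD.findall(source))
    return [name for name in naive_flags(source) if name not in guarded]


if __name__ == "__main__":
    print("naive:", naive_flags(PHP_SOURCE))
    print("context-aware:", context_aware_flags(PHP_SOURCE))
```

Even this heuristic is too coarse for real use, since a guard in one place does not prove every call to that function is guarded. It only shows why purely textual matching reports code that would never run on the site's PHP version.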

One of the “metrics” PagePipe uses to judge plugin quality is dividing the number of active installs by the number of all-time downloads. This percentage is the plugin retention rate. It is a much stronger indicator of quality than mere popularity (installs). Bad retention is anything below 10 percent and good is anything above 30 percent. Excellent is 50 percent. That’s the highest retention we see. The bulk of plugins are tested and dumped by site owners. Sometimes they are left installed and active but not used.
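As a rough illustration of the retention calculation described above, here is a small sketch. The function names are made up for this example, the sample numbers are invented, and two details are assumptions: rates from 10 to 30 percent are not named in the comment, so they are returned as "unlabeled", and "excellent" is treated as 50 percent or higher.

```python
def retention_rate(active_installs: int, all_time_downloads: int) -> float:
    """Return active installs as a percentage of all-time downloads."""
    if all_time_downloads <= 0:
        raise ValueError("all_time_downloads must be positive")
    return 100.0 * active_installs / all_time_downloads


def retention_label(rate_percent: float) -> str:
    """Classify a retention rate using the thresholds quoted in the comment."""
    if rate_percent >= 50:
        return "excellent"  # assumption: 50 percent or more counts as excellent
    if rate_percent > 30:
        return "good"
    if rate_percent < 10:
        return "bad"
    return "unlabeled"      # assumption: the 10-30 percent band has no stated name


if __name__ == "__main__":
    # Invented sample figures, not data for any real plugin.
    rate = retention_rate(active_installs=100_000, all_time_downloads=400_000)
    print(f"{rate:.1f}% retention: {retention_label(rate)}")
```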

Also, why is the zip file weight never shown? This is frequently a good indicator of bloat. Because plugin speed is our research topic at PagePipe, we always start testing the lightest plugin packages first and work up in weight.

There is no indicator of plugin impact on page speed. Nor is there any reporting of a common deleterious speed infraction we call “site drag.” This is where a plugin loads on every single page and post of your site whether its function is used or not. The Contact Form 7 plugin is a perfect example. It adds about 43 milliseconds globally, even on pages where there is no shortcode. This is not reported in readme files or on the download page.

Indication of what version of PHP is required would be beneficial. For example, P3 Plugin Performance Profiler by GoDaddy only measures *itself* on PHP 7. Yet it has 100,000 active installs.

Staleness or freshness (age) has nothing to do with plugin quality. Either can be good or bad. Many plugins that are 8 years old and abandoned or orphaned are still perfectly good, working, functional plugins. You can’t just say “old” is bad.

A *fresh* plugin that was just updated hours ago may be riddled with unproven rotten code that crashes your sites. Sometimes it pays to wait and watch for complaints to start being reported. Jumping on “fresh” isn’t always a good practice.

With that said, why can’t we search on *freshness* or *weight* as a parameter in the directory?

Can we even get a listing of the entire directory with a sort by update?

The more popular a plugin is, the slower and heavier it is. We see this correlation all of the time. Many faster and more competitive plugins usually exist but never have a chance to get noticed with the present rating system. This hampers creativity and increases author abandonment.

This is very exciting and definitely something we need in the WordPress community. I’ve commented/asked questions on several discussions wondering when WordPress would do this kind of project. I’ve been concentrating on performance lately and was surprised how core loads so much JavaScript in the header and how quickly duplicate JavaScript grows with each plugin. Will your project provide guidance on how to access an approved library of JS to reduce redundancy and incompatibility and improve performance?

Will it be “just” an addition to the wp.org repository or will it be available to all devs?

I would like to check my Tide score for projects outside of wp.org, like those on GitHub or GitLab.

Big fan of this. I hope, though, that you will provide a means for developers to get feedback on the problems found within their code. Maybe provide it as a paid service for *before* plugins are released in the wild.

Hi Chris. The reports will certainly be made available to developers. These will be presented in a way that makes the feedback nice and easy to action. Our big picture is to increase code quality across the board, so equipping developers is a massive part of this. Thanks for your thoughts. 🙂

This is a very interesting concept. I’ve mentioned this in a tweet and also WPTavern’s posting regarding this, but after looking at the screenshot, you may want to consider green stars instead of grey. Green for people would come across as OK, approved, good, etc.

Andre, thanks for the feedback. You’re not the first to think of this about the stars. 🙂 We’re exploring a few ideas and this screenshot is a very early concept. If you’d like to follow the discussion around this, and other parts of Tide, you can join the #tide channel on the Make WordPress Slack.